Picture this: it’s 2019, and a mid-sized fintech startup just deployed their shiny new monolithic application to a single beefy server. Six months later, Black Friday traffic hits, the server chokes, and they’re staring at $2.3 million in lost transactions over a four-hour outage. Fast forward to today — that same company rebuilt everything from scratch using cloud native principles and now handles 40x the peak load with zero planned downtime. I’ve watched this story play out dozens of times, and every single time, the turning point was the same: someone finally sat down and asked, “What does cloud native actually mean at the architecture level?”

Let’s think through this together, because the phrase “cloud native” gets thrown around so casually in 2026 that it’s started to lose meaning. It’s not just about running containers on Kubernetes. It’s a fundamentally different philosophy about how software should be designed, built, and operated.

What Cloud Native Architecture Actually Means (Beyond the Buzzword)

The Cloud Native Computing Foundation (CNCF) defines cloud native systems as those that are scalable, resilient, manageable, and observable — deployed in modern dynamic environments like public, private, and hybrid clouds. But let’s unpack what that looks like in practice as of 2026.

According to the CNCF’s 2026 Annual Survey, 87% of organizations are now running containerized workloads in production, up from 67% in 2022. More tellingly, organizations that fully adopted cloud native design principles reported 43% faster time-to-market and a 31% reduction in infrastructure costs compared to lift-and-shift cloud adopters. The gap between “we use the cloud” and “we are cloud native” is measurable, and it’s significant.

The Core Design Principles — Let’s Break Them Down

1. Design for Failure (Not Just Against It)

This is the principle that trips up teams coming from traditional infrastructure backgrounds. In classical architecture, you design to prevent failure. In cloud native, you design assuming failure is inevitable and continuous. Netflix’s Chaos Engineering practice — deliberately injecting failures into production — is the most famous example, but in 2026, this mindset has become table stakes. Tools like Chaos Mesh and LitmusChaos have made chaos engineering accessible even to smaller engineering teams.
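To make “assume failure” concrete, here’s a minimal sketch of one common building block: retrying a flaky call with exponential backoff and jitter, then degrading to a fallback instead of crashing. The names `call_with_retry` and `FlakyDependency` are hypothetical and exist only for illustration; a production system would typically add timeouts and a circuit breaker on top.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_retry(
    operation: Callable[[], T],
    fallback: Callable[[], T],
    max_attempts: int = 3,
    base_delay: float = 0.1,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Try `operation` up to `max_attempts` times, then degrade to `fallback`."""
    for attempt in range(max_attempts):
        try:
            return operation()
        except ConnectionError:
            if attempt == max_attempts - 1:
                break  # out of attempts; fall through to the fallback
            # Exponential backoff with full jitter so retries do not synchronize.
            sleep(random.uniform(0, base_delay * 2 ** attempt))
    return fallback()


class FlakyDependency:
    """Hypothetical dependency that fails a fixed number of times, then recovers."""

    def __init__(self, failures: int) -> None:
        self.remaining_failures = failures
        self.calls = 0

    def fetch(self) -> str:
        self.calls += 1
        if self.remaining_failures > 0:
            self.remaining_failures -= 1
            raise ConnectionError("simulated outage")
        return "live-data"
```

Passing `sleep` as a parameter keeps the function testable: a chaos experiment or unit test can inject failures and skip the real delays.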

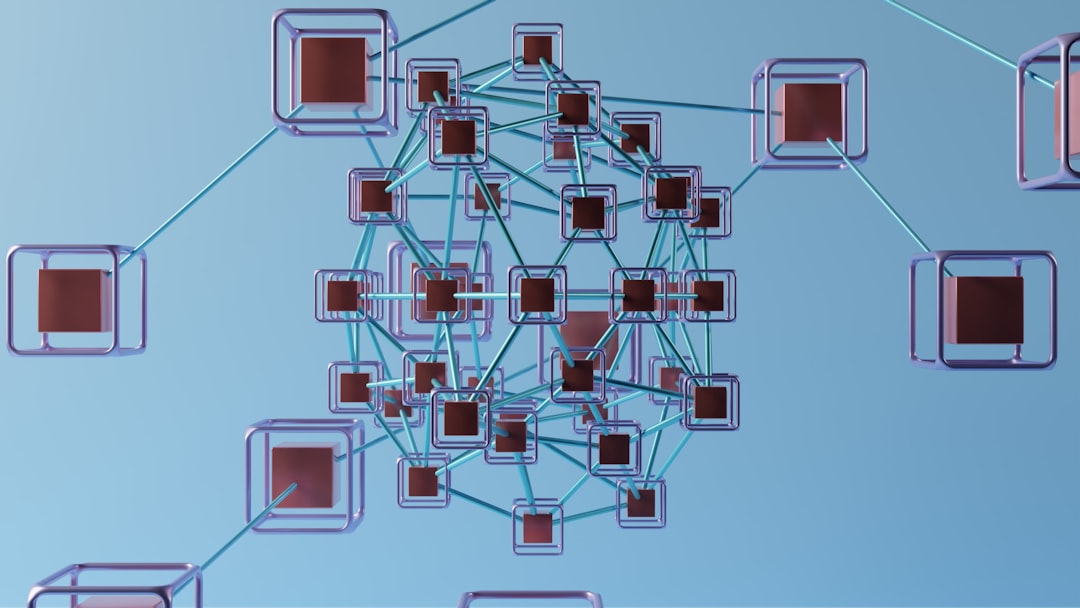

2. Loose Coupling and High Cohesion

Each service should do one thing well (high cohesion) and communicate with other services through well-defined, stable interfaces (loose coupling). The practical implication? If you need to deploy Service A, Service B should have absolutely no idea it happened. If a change in one service requires coordinated deployments across three others, you have a distributed monolith — not a microservices architecture.

3. API-First Design

Before writing implementation code, define the contract. OpenAPI Specification 3.1 and AsyncAPI 3.0 have become the dominant standards in 2026 for synchronous and event-driven APIs respectively. This principle enables parallel development across teams and makes your system’s boundaries explicit from day one.
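In a real API-first workflow the contract would live in an OpenAPI or AsyncAPI document. As a rough Python stand-in, the sketch below uses a `typing.Protocol` to play the role of the contract, so you can see how a consuming team builds against it before the real service exists. `PaymentsApi`, `StubPaymentsApi`, and `checkout` are all hypothetical names.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class PaymentRequest:
    account_id: str
    amount_cents: int


@dataclass(frozen=True)
class PaymentResult:
    payment_id: str
    accepted: bool


class PaymentsApi(Protocol):
    """The contract, agreed on before either team writes implementation code."""

    def create_payment(self, request: PaymentRequest) -> PaymentResult: ...


class StubPaymentsApi:
    """Stand-in the consuming team can build against until the real service exists."""

    def create_payment(self, request: PaymentRequest) -> PaymentResult:
        return PaymentResult(payment_id="stub-1", accepted=request.amount_cents > 0)


def checkout(api: PaymentsApi, account_id: str, amount_cents: int) -> str:
    """Consumer code depends only on the contract, not on any implementation."""
    result = api.create_payment(PaymentRequest(account_id, amount_cents))
    return "confirmed" if result.accepted else "declined"
```

For example, `checkout(StubPaymentsApi(), "acct-1", 500)` returns `"confirmed"` without a payments service running anywhere.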

4. Observability as a First-Class Citizen

The classic monitoring question was “Is the system up?” Cloud native observability asks “Why is the system behaving this way?” That means instrumenting your code for the three pillars: metrics (what is happening in aggregate), logs (what happened at a specific moment), and traces (where a request went and where time was spent). The OpenTelemetry project has largely standardized how teams collect and export this data.
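To show how the three signals relate, here’s a small sketch using only the Python standard library. It is deliberately not the OpenTelemetry API; `span`, `handle_request`, and the in-memory `metrics`, `log_lines`, and `spans` collections are hypothetical stand-ins for what a real SDK and backend would provide.

```python
import json
import time
import uuid
from collections import Counter
from contextlib import contextmanager

metrics = Counter()  # metrics: what is happening (aggregated counts)
log_lines = []       # logs: what happened (discrete structured events)
spans = []           # traces: where time was spent, per request


@contextmanager
def span(name, trace_id):
    """Record how long the wrapped block took, tagged with the request's trace ID."""
    start = time.perf_counter()
    try:
        yield
    finally:
        spans.append({
            "trace_id": trace_id,
            "name": name,
            "duration_ms": (time.perf_counter() - start) * 1000,
        })


def handle_request(path):
    trace_id = uuid.uuid4().hex  # one ID ties the log line and spans together
    with span("handle_request", trace_id):
        with span("load_data", trace_id):
            time.sleep(0.01)  # placeholder for a database or downstream call
        metrics[("requests_total", path)] += 1
        log_lines.append(json.dumps(
            {"trace_id": trace_id, "event": "request_served", "path": path}
        ))
    return trace_id
```

The shared `trace_id` is the important part: it lets you jump from a suspicious metric to the exact log lines and spans for one request.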

5. Immutable Infrastructure

Servers and containers should never be modified after deployment. If something needs to change, you build a new image and deploy it. This eliminates the dreaded “configuration drift” problem where production environments slowly diverge from what was originally deployed.
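A toy model of the idea, assuming a hypothetical `Image` type: configuration is baked in at build time, any change produces a new artifact with a new digest, and a rollout replaces instances instead of patching them.

```python
import hashlib
from dataclasses import dataclass, FrozenInstanceError


@dataclass(frozen=True)
class Image:
    """An immutable build artifact, identified by a digest of its contents."""

    app_version: str
    config: tuple  # sorted (key, value) pairs baked in at build time

    @property
    def digest(self) -> str:
        payload = repr((self.app_version, self.config)).encode()
        return hashlib.sha256(payload).hexdigest()[:12]


def build_image(app_version: str, config: dict) -> Image:
    return Image(app_version, tuple(sorted(config.items())))


def roll_out(running: list, new_image: Image) -> list:
    """Replace every instance with the new image; nothing is patched in place."""
    return [new_image for _ in running]
```

Because identical inputs always yield the same digest, “what is running in production” reduces to a single value you can compare against what was built.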

6. Declarative Configuration and GitOps

Your infrastructure and application configuration should describe the desired state, not the steps to get there. Kubernetes is built around this principle: you declare the state you want, and controllers continuously reconcile the cluster toward it. GitOps, which uses Git as the single source of truth for both application and infrastructure configuration, has matured enormously, with tools like Flux and ArgoCD now handling some of the world’s most complex deployments.
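The difference between “desired state” and “steps” is easiest to see in a reconciliation loop. The sketch below is a simplified illustration, not how Kubernetes or any GitOps tool is actually implemented: state is just a hypothetical mapping of workload name to replica count.

```python
def reconcile(desired: dict, actual: dict) -> list:
    """Compute the actions that move `actual` toward `desired`.

    Both map a workload name to a replica count. The caller declares the
    end state; this function, not the caller, works out the steps.
    """
    actions = []
    for name, replicas in sorted(desired.items()):
        current = actual.get(name)
        if current is None:
            actions.append(("create", name, replicas))
        elif current != replicas:
            actions.append(("scale", name, replicas))
    for name in sorted(actual.keys() - desired.keys()):
        actions.append(("delete", name, 0))
    return actions


def apply(actions: list, actual: dict) -> dict:
    """Apply actions to a copy of the actual state and return the result."""
    state = dict(actual)
    for verb, name, replicas in actions:
        if verb == "delete":
            state.pop(name)
        else:
            state[name] = replicas
    return state
```

Running `reconcile` again after `apply` returns an empty list, which is the property that makes the loop safe to run continuously.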

7. Stateless Services Where Possible

Services should not hold session state internally. State belongs in purpose-built stores (databases, caches, message queues). This is what enables horizontal scaling — if any instance of your service can handle any request, you can spin up 10 or 10,000 of them transparently.
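Here’s a small sketch of what “any instance can handle any request” means in code. `SessionStore` is a hypothetical in-memory stand-in for an external cache or database, and `itertools.cycle` is a crude substitute for a load balancer.

```python
import itertools


class SessionStore:
    """Stand-in for an external store such as a cache or database."""

    def __init__(self) -> None:
        self._data = {}

    def get(self, session_id: str) -> dict:
        return self._data.get(session_id, {"cart": []})

    def put(self, session_id: str, session: dict) -> None:
        self._data[session_id] = session


class CartService:
    """A stateless instance: it keeps no per-user data between requests."""

    def __init__(self, name: str, store: SessionStore) -> None:
        self.name = name
        self.store = store

    def add_item(self, session_id: str, item: str) -> list:
        session = self.store.get(session_id)
        session = {"cart": session["cart"] + [item]}
        self.store.put(session_id, session)
        return session["cart"]


store = SessionStore()
instances = [CartService(f"instance-{i}", store) for i in range(3)]
round_robin = itertools.cycle(instances)  # crude stand-in for a load balancer
```

Three consecutive requests for the same session land on three different instances, and a brand-new instance can join and pick up that session without any warm-up.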

Real-World Examples: Who’s Getting This Right in 2026?

Kakao (South Korea): After experiencing a catastrophic data center fire in 2022 that took services offline for over 127 hours, Kakao undertook one of the most publicized cloud native rebuilds in Asia. By 2025, they had re-architected their core messaging platform across multi-region active-active deployments using a cell-based architecture — a cloud native pattern that isolates failure to a specific “cell” of users rather than taking down the entire system. Their current RTO (Recovery Time Objective) for regional failures is under 90 seconds.
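The core of a cell-based design is a stable mapping from user to cell. The sketch below is a generic illustration and makes no claim about how Kakao implemented theirs; the cell names and the hash-modulo routing are assumptions chosen for brevity.

```python
import hashlib

CELLS = ["cell-a", "cell-b", "cell-c", "cell-d"]  # hypothetical cell names


def cell_for(user_id: str, cells=CELLS) -> str:
    """Pin each user to one cell using a stable hash of the user ID."""
    digest = hashlib.sha256(user_id.encode()).digest()
    return cells[int.from_bytes(digest[:8], "big") % len(cells)]


def affected_users(user_ids, failed_cell: str) -> list:
    """Users who lose service when a single cell fails."""
    return [u for u in user_ids if cell_for(u) == failed_cell]
```

With this routing, losing one cell affects only the users pinned to it. Note that plain modulo hashing reshuffles users whenever the number of cells changes, so real deployments often use an explicit mapping table or consistent hashing instead.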

Shopify (Canada/Global): Shopify’s journey to cloud native is a masterclass in incremental migration. Rather than a big-bang rewrite, they decomposed their Rails monolith service by service over several years, using the “strangler fig” pattern. By 2026, their platform processes over 6 million requests per second at peak, with individual microservices scaling independently during events like BFCM (Black Friday/Cyber Monday).
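The strangler fig pattern boils down to a routing facade that sits in front of the old system and hands off one route at a time. This is a generic sketch with hypothetical handler names, not a description of Shopify’s actual routing layer.

```python
def legacy_monolith(path: str) -> str:
    return f"monolith handled {path}"


def checkout_service(path: str) -> str:
    return f"checkout-service handled {path}"


class StranglerRouter:
    """Facade in front of the monolith; extracted routes are peeled off one at a time."""

    def __init__(self, default_handler) -> None:
        self.default_handler = default_handler
        self.extracted = {}  # path prefix -> new handler

    def extract(self, prefix: str, handler) -> None:
        self.extracted[prefix] = handler

    def handle(self, path: str) -> str:
        # Longest matching prefix wins, so a sub-route can be moved separately later.
        for prefix in sorted(self.extracted, key=len, reverse=True):
            if path.startswith(prefix):
                return self.extracted[prefix](path)
        return self.default_handler(path)
```

Before `router.extract("/checkout", checkout_service)` is called, every path goes to the monolith; afterwards only `/checkout` traffic moves, and rolling back is a one-line change.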

Toss (South Korea): The fintech super-app has become a regional reference architecture for cloud native financial services. Their use of event sourcing and CQRS (Command Query Responsibility Segregation) patterns — both advanced cloud native design choices — allows them to maintain a complete, immutable audit log of every financial transaction while still serving read-heavy dashboards at millisecond response times.
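To see why these two patterns pair well, here’s a minimal in-memory sketch: commands append immutable events to a log, and queries read from a separate projection. The `Ledger`, `Event`, and `rebuild` names are hypothetical, and nothing here reflects Toss’s actual implementation.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    account_id: str
    kind: str  # "deposited" or "withdrew"
    amount_cents: int


class Ledger:
    def __init__(self) -> None:
        self._events = []                  # write side: append-only log
        self._balances = defaultdict(int)  # read side: projection for fast queries

    # Command side: validate, append an event, then update the projection.
    def deposit(self, account_id: str, amount_cents: int) -> None:
        self._append(Event(account_id, "deposited", amount_cents))

    def withdraw(self, account_id: str, amount_cents: int) -> None:
        if self._balances[account_id] < amount_cents:
            raise ValueError("insufficient funds")
        self._append(Event(account_id, "withdrew", amount_cents))

    def _append(self, event: Event) -> None:
        self._events.append(event)
        sign = 1 if event.kind == "deposited" else -1
        self._balances[event.account_id] += sign * event.amount_cents

    # Query side: reads never scan the event log.
    def balance(self, account_id: str) -> int:
        return self._balances[account_id]

    def audit_log(self) -> tuple:
        return tuple(self._events)


def rebuild(events) -> Ledger:
    """Reconstruct state from the log alone, which is what makes it an audit trail."""
    ledger = Ledger()
    for event in events:
        ledger._append(event)
    return ledger
```

In a real system the projection would usually live in a separate read store and be updated asynchronously, which is where the millisecond reads come from.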

The Building Blocks: A Practical Checklist

- Containerization: Package applications and their dependencies in containers (Docker remains dominant, but containerd is increasingly used directly)

- Container Orchestration: Kubernetes for workload scheduling, scaling, and self-healing (managed options: GKE, EKS, AKS)

- Service Mesh: Istio or Cilium for service-to-service communication, security (mTLS), and traffic management

- CI/CD Pipelines: Automated build, test, and deployment — GitHub Actions, Tekton, and ArgoCD are dominant in 2026

- Distributed Tracing: OpenTelemetry + Jaeger or Grafana Tempo to understand request flows across services

- Event Streaming: Apache Kafka or Redpanda for decoupled, asynchronous communication between services

- Secrets Management: HashiCorp Vault or cloud-native equivalents (AWS Secrets Manager, GCP Secret Manager) — never hardcode credentials

- Policy as Code: Open Policy Agent (OPA) or Kyverno for enforcing governance rules at the infrastructure level
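To give a feel for the last item, here’s the idea of policy as code in plain Python. OPA policies are actually written in Rego and Kyverno policies in YAML, so treat this only as an illustration of the concept; the manifest shape (`containers`, `memory_limit`, `env`) is a simplified, hypothetical format rather than the Kubernetes schema.

```python
def check_deployment(manifest: dict) -> list:
    """Return policy violations for a deployment manifest (empty list means allowed)."""
    violations = []
    for container in manifest.get("containers", []):
        name = container.get("name", "<unnamed>")
        image = container.get("image", "")
        # Look only at the last path segment so a registry port is not mistaken for a tag.
        last_segment = image.rsplit("/", 1)[-1]
        if ":" not in last_segment or last_segment.endswith(":latest"):
            violations.append(f"{name}: image must be pinned to a specific tag")
        if "memory_limit" not in container:
            violations.append(f"{name}: memory limit is required")
        for key in container.get("env", {}):
            if "PASSWORD" in key.upper() or "SECRET" in key.upper():
                violations.append(f"{name}: {key} must come from a secrets manager")
    return violations
```

The value is that rules like “never hardcode credentials” stop being tribal knowledge and become a check that runs on every change.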

Where Teams Commonly Go Wrong (And How to Think About It Differently)

The most common mistake I see in 2026 is microservices-as-a-default. Teams hear “cloud native” and immediately start splitting everything into tiny services — before they even understand their domain boundaries. This almost always produces a distributed monolith that’s harder to operate than the original system.

A better mental model: start with a well-structured modular monolith. Use clear internal module boundaries. Deploy it on cloud infrastructure using containers and proper CI/CD. Then, when you have genuine scaling bottlenecks or team autonomy needs that a monolith can’t accommodate, extract services based on real data. This is the “modular monolith first” approach advocated by architects like Sam Newman and Martin Fowler, and it’s far more pragmatic for teams under 50 engineers.

Realistic Alternatives Based on Your Situation

Not every team needs the full cloud native stack on day one. Here’s how I’d think about it based on where you are:

Early-stage startup (under 10 engineers): Focus on containerization, a managed Kubernetes service (like GKE Autopilot), and solid CI/CD. Don’t introduce a service mesh yet — the operational overhead isn’t worth it. A single well-structured service with good observability beats a poorly designed microservices architecture every time.

Growth-stage company (10-100 engineers): This is where domain-driven design becomes essential for identifying service boundaries. Invest in a platform engineering team dedicated to the internal developer experience. GitOps with ArgoCD will pay dividends quickly at this stage.

Enterprise (100+ engineers): Multi-cluster Kubernetes, cell-based architecture for fault isolation, and mature FinOps practices become critical. The tooling complexity is real — budget for it, both financially and in terms of engineering headcount.

The honest truth is that cloud native architecture is not a destination — it’s a continuous practice. The principles don’t change, but the tools and your implementation will evolve as your system and organization grow. The teams that succeed are the ones who internalize the why behind each principle, not just the what.

Editor’s Comment: If there’s one thing to take away from all of this, it’s that cloud native is fundamentally about optionality — building systems that can change, scale, and recover without heroic manual effort. The best architecture decision you can make today is the one that keeps your options open tomorrow. Start small, instrument everything from the beginning, and let real usage data drive your decomposition decisions. The teams that are winning in 2026 aren’t the ones with the most sophisticated toolchain — they’re the ones who deeply understand their problem domain and apply these principles with discipline and intentionality.

Tags: [‘cloud native architecture’, ‘microservices design principles’, ‘Kubernetes 2026’, ‘cloud native development’, ‘distributed systems design’, ‘GitOps best practices’, ‘platform engineering’]