Picture this: it’s early 2026, and a chip the size of your thumbnail is quietly orchestrating everything from real-time medical diagnostics to autonomous vehicle navigation — all while sipping power like a hummingbird rather than guzzling it like a jet engine. That’s not science fiction anymore. That’s the current state of AI semiconductor technology, and honestly, it’s moving faster than most of us can comfortably track.

I’ve been following this space closely, and what strikes me most isn’t just the raw performance numbers — it’s the philosophy behind how engineers are rethinking what a chip should actually do. Let’s dig into what’s really happening on the silicon frontier in 2026.

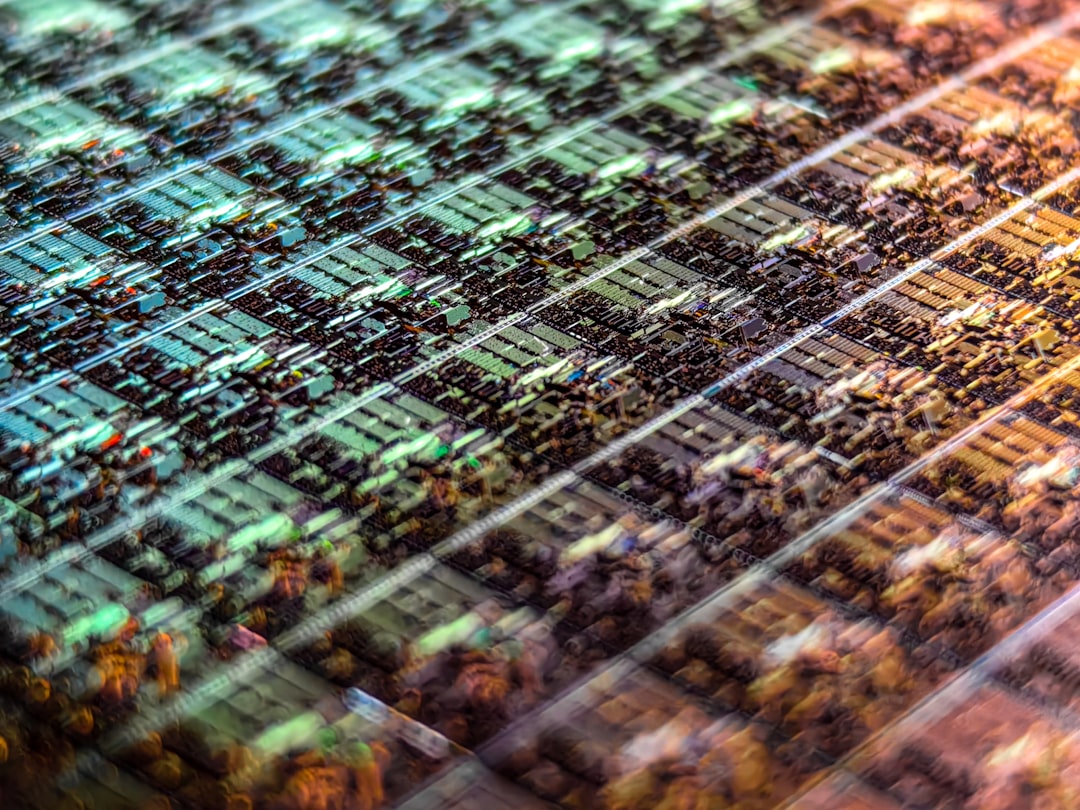

1. The 2nm Era Has Officially Arrived — And It’s Complicated

By early 2026, TSMC’s N2 (2-nanometer class) process has moved from limited production into broader commercial deployment, with Samsung’s SF2 node following closely behind. But here’s the thing — the jump from 3nm to 2nm isn’t just about cramming more transistors onto a wafer. It’s about Gate-All-Around (GAA) transistor architecture becoming the dominant design paradigm.

GAA transistors wrap the gate material around all four sides of the channel, giving engineers far better control over electron flow than the older FinFET design, where the gate covers only three sides. The practical result? Roughly 15–20% higher performance or 25–30% lower power compared to equivalent 3nm chips; foundries typically quote these as alternatives (more speed at the same power, or less power at the same speed) rather than gains that arrive together. For AI workloads, which are notoriously power-hungry, that’s genuinely transformative.

NVIDIA’s Blackwell Ultra architecture, now shipping in volume, delivers inference performance that would have required a small data center just three years ago, although it is built on a 4nm-class TSMC process (4NP) rather than on N2. Meanwhile, AMD’s MI400 series chips are pushing the boundaries of HBM4 memory bandwidth, and bandwidth has become the critical bottleneck for large language model inference.

2. Memory Bandwidth: The Unsung Hero (and Bottleneck) of AI Chips

Here’s something that doesn’t get enough attention in mainstream coverage: raw compute power means almost nothing if your chip is starving for data. This is the so-called “memory wall,” and in 2026, it’s become the central battlefield of AI hardware design.

SK Hynix began mass production of HBM4 (High Bandwidth Memory 4th generation) in late 2025, offering peak bandwidth exceeding 2 TB/s per stack — roughly double what HBM3e delivered. This matters enormously because transformer-based AI models spend most of their time shuffling weights and activations between compute units and memory, not actually computing.
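To make the memory wall concrete, here is a back-of-the-envelope sketch in Python. It assumes the simplest bandwidth-bound case: generating one token requires reading every model weight from memory once, so bandwidth divided by model size gives a ceiling on tokens per second for a single request. The 70B-parameter model, the 8-bit weights, and the eight-stack configuration are hypothetical values chosen for illustration; only the 2 TB/s per-stack figure comes from the text above.

```python
def decode_tokens_per_second(params_billion, bytes_per_param, bandwidth_tb_s):
    """Ceiling on single-request decode rate if every weight is read once per token."""
    model_bytes = params_billion * 1e9 * bytes_per_param
    bandwidth_bytes_per_s = bandwidth_tb_s * 1e12
    return bandwidth_bytes_per_s / model_bytes


# Hypothetical 70B-parameter model stored as 8-bit weights (1 byte each).
one_stack = decode_tokens_per_second(70, 1, 2.0)         # a single 2 TB/s stack
eight_stacks = decode_tokens_per_second(70, 1, 8 * 2.0)  # assumed 8-stack package

print(f"1 stack:  {one_stack:.1f} tokens/s ceiling")
print(f"8 stacks: {eight_stacks:.1f} tokens/s ceiling")
```

Notice that compute throughput never appears in the formula. Under these assumptions the ceiling moves only when bandwidth goes up or the model gets smaller, which is why batching, quantization, and faster memory get so much attention.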

Micron and Samsung are countering with innovations in Compute-in-Memory (CiM) architectures, where certain mathematical operations happen directly within the memory array itself, so the operands never have to cross the memory bus. Early commercial implementations are showing promising results for specific inference tasks, though training large models still relies on conventional approaches.

3. Domestic and International Landscape: Who’s Winning, Who’s Catching Up

Let’s be honest about the global picture, because it’s genuinely nuanced:

- United States: NVIDIA remains the dominant force in AI training silicon, but Intel’s Gaudi 3 Ultra has carved out real market share in cloud inference, particularly among cost-sensitive deployments. The CHIPS Act investments are starting to show tangible results, with Intel’s Ohio fab expanding capacity significantly.

- South Korea: Samsung and SK Hynix continue to hold commanding positions in HBM, arguably the most strategically important component in the AI chip stack right now. Samsung Foundry is aggressively courting AI chip startups looking for alternatives to TSMC’s increasingly backlogged capacity.

- Taiwan: TSMC’s competitive moat remains formidable. Their CoWoS (Chip-on-Wafer-on-Substrate) advanced packaging technology is currently the dominant production-ready solution for integrating the large logic dies and HBM stacks that cutting-edge AI accelerators require. Demand continues to outpace capacity well into 2026.

- China: Despite export restrictions limiting access to leading-edge nodes, companies like Cambricon and Biren Technology are iterating rapidly on 7nm-class designs optimized for domestic cloud AI workloads. The gap is real but not insurmountable for inference-focused applications.

- Startups to watch: Groq (inference-focused LPU architecture), Cerebras (wafer-scale computing), and Tenstorrent (RISC-V based AI cores) are all shipping commercial hardware in 2026 and challenging conventional GPU assumptions for specific workloads.

4. The Efficiency Revolution: Edge AI Chips Are Growing Up

Not every AI application needs a data center. In fact, some of the most exciting semiconductor innovation happening right now is targeting the edge — meaning devices that run AI locally without constant cloud connectivity.

Apple’s M5 series chips (introduced in late 2025) demonstrated that a Neural Processing Unit (NPU) integrated into a consumer chip could handle surprisingly sophisticated on-device AI tasks, from real-time language translation to complex image understanding. Qualcomm’s Snapdragon 8 Elite 2 follows a similar philosophy for mobile platforms, with dedicated AI acceleration claiming up to 50 TOPS (Tera Operations Per Second) for on-device inference.
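A TOPS figure is easier to judge once you turn it into time. The sketch below is a rough estimate, not a benchmark: it assumes a dense model needs about two operations (one multiply, one add) per parameter per generated token, and it treats the quoted TOPS as a peak that real workloads only partly reach. The 3-billion-parameter model and the 30% utilization figure are hypothetical.

```python
def min_latency_ms(params_million, tops, utilization=1.0):
    """Compute-only lower bound on per-token latency, in milliseconds."""
    ops_per_token = 2 * params_million * 1e6     # one multiply + one add per weight
    ops_per_second = tops * 1e12 * utilization   # peak TOPS scaled by achieved utilization
    return ops_per_token / ops_per_second * 1e3


# Hypothetical 3B-parameter on-device model on a 50 TOPS NPU.
at_peak = min_latency_ms(3000, 50)
at_30_percent = min_latency_ms(3000, 50, utilization=0.3)

print(f"at peak: {at_peak:.2f} ms/token, at 30% utilization: {at_30_percent:.2f} ms/token")
```

This bound counts compute only. On real devices memory bandwidth often sets a tighter limit, so treat the result as a floor rather than a prediction.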

What’s particularly interesting here is the software-hardware co-design trend. Chip architects are increasingly designing silicon in close collaboration with AI framework teams — essentially asking “what mathematical patterns show up most often in modern neural networks?” and then building custom silicon pathways for exactly those patterns. It’s a fundamentally different approach from the general-purpose GPU strategy that dominated the last decade.
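One way to see which patterns dominate is to count where the arithmetic goes in a single transformer layer. The sketch below uses the textbook forward-pass estimate for a standard multi-head attention layer with a feed-forward block four times the model width, counting two floating-point operations per multiply-add. The sequence length and model width are hypothetical, and elementwise work such as softmax and normalization is deliberately omitted, so this is an illustration of the reasoning rather than a profile of any real chip or model.

```python
def layer_matmul_flops(seq_len, d_model, ffn_mult=4):
    """Forward-pass matmul FLOPs for one standard transformer layer."""
    s, d = seq_len, d_model
    return {
        # Q, K, V and output projections: four (s x d) @ (d x d) matmuls
        "qkv_and_output_projections": 8 * s * d * d,
        # Q @ K^T plus the attention-weighted sum over V, summed across heads
        "attention_scores_and_mix": 4 * s * s * d,
        # two matmuls between width d and width ffn_mult * d
        "feed_forward": 4 * ffn_mult * s * d * d,
    }


# Hypothetical layer: 2048-token sequence, model width 4096.
flops = layer_matmul_flops(seq_len=2048, d_model=4096)
total = sum(flops.values())
for name, value in flops.items():
    print(f"{name}: {value / total:.1%} of matmul FLOPs")
```

Every entry in that dictionary is a dense matrix multiplication, which is one plausible reading of why so much accelerator area goes to matrix engines instead of general-purpose cores.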

5. Realistic Considerations: What This Means If You’re Not a Chip Engineer

Most of us aren’t designing silicon, so what does this wave of innovation actually mean in practical terms?

- For businesses: Cloud AI inference costs are dropping meaningfully as more efficient chips reach market. If you shelved an AI application idea because the API costs were prohibitive in 2024, it’s worth revisiting the economics in 2026.

- For developers: The proliferation of capable NPUs in consumer devices means on-device inference is increasingly viable. Consider whether your application genuinely needs cloud connectivity or whether local processing could offer better privacy and lower latency.

- For investors and strategists: The memory supply chain — particularly HBM — remains a chokepoint worth understanding. Companies that control HBM capacity have unusual leverage in the AI infrastructure stack.

- For curious learners: If you want to understand this space more deeply, start with the basics of how transformer models work computationally — because almost every architectural decision in modern AI chips is optimized around transformer workloads.
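For the business point above, revisiting the economics can start with one line of arithmetic: divide what an accelerator costs per hour by how many tokens it serves in that hour. The sketch below does exactly that. The hourly price and the throughput figures are made-up placeholders, not quotes from any provider, so substitute your own measurements.

```python
def cost_per_million_tokens(hourly_cost_usd, tokens_per_second):
    """Serving cost in USD per one million generated tokens."""
    tokens_per_hour = tokens_per_second * 3600
    return hourly_cost_usd / tokens_per_hour * 1e6


# Placeholder figures: the same $4/hour instance at two throughput levels.
older_chip = cost_per_million_tokens(4.0, 1000)
newer_chip = cost_per_million_tokens(4.0, 2500)

print(f"older: ${older_chip:.2f} per 1M tokens, newer: ${newer_chip:.2f} per 1M tokens")
```

At a fixed hourly price, cost per token falls in direct proportion to throughput, which is the mechanism behind cheaper inference as more efficient chips reach the market.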

The semiconductor industry has always operated on cycles of breathtaking ambition followed by hard engineering reality checks. What feels different in 2026 is the alignment of incentives — massive capital investment, geopolitical urgency, and genuine commercial demand are all pushing in the same direction simultaneously. That combination tends to produce remarkable things.

One counterpoint worth holding onto: not every application needs the bleeding edge. A well-optimized model running on 5nm hardware from two years ago can still accomplish extraordinary things. The chip race matters enormously at the frontier, but most real-world AI deployment decisions involve more mundane tradeoffs around cost, reliability, and developer tooling than pure silicon performance.

Editor’s Comment: What genuinely excites me about the 2026 AI semiconductor landscape isn’t any single chip or benchmark; it’s the conceptual diversity. We’re seeing radically different bets on architecture, memory design, and packaging technology all competing simultaneously. History suggests that periods of architectural pluralism like this one tend to produce the unexpected breakthroughs that define the next decade. Stay curious, and don’t let any single narrative about “who’s winning” substitute for understanding the actual engineering tradeoffs at play.

Tags: AI semiconductor 2026, AI chip technology trends, TSMC 2nm GAA, HBM4 memory bandwidth, edge AI chips, NVIDIA Blackwell Ultra, AI hardware innovation
