A few months back, I was on a video call with a team lead at a fintech startup who’d just survived a brutal incident: their monolithic payment service had gone completely dark during a flash sale, cutting off 40,000 concurrent users. She said something that stuck with me: “We kept reading about cloud native, but we thought it was just a buzzword for big companies.” Three hours of downtime, an estimated $280K in lost revenue, and a very unhappy CTO later, yeah, it’s not just a buzzword.

That conversation sent me down a rabbit hole of revisiting everything I’ve accumulated over a decade of building, breaking, and rebuilding distributed systems. Cloud native application design isn’t a single technique — it’s a philosophy. And honestly, it’s one that rewards you only when you’ve felt the pain of not following it. Let’s dig in together.

What “Cloud Native” Actually Means (Beyond the Marketing Fluff)

The Cloud Native Computing Foundation (CNCF) defines cloud native as a set of practices that enable organizations to build and run scalable applications in modern dynamic environments such as public, private, and hybrid clouds. But what does that mean on the ground? As of 2026, the CNCF landscape tracks over 1,400 projects and tools, up from around 1,100 in 2023. That’s both exciting and terrifying.

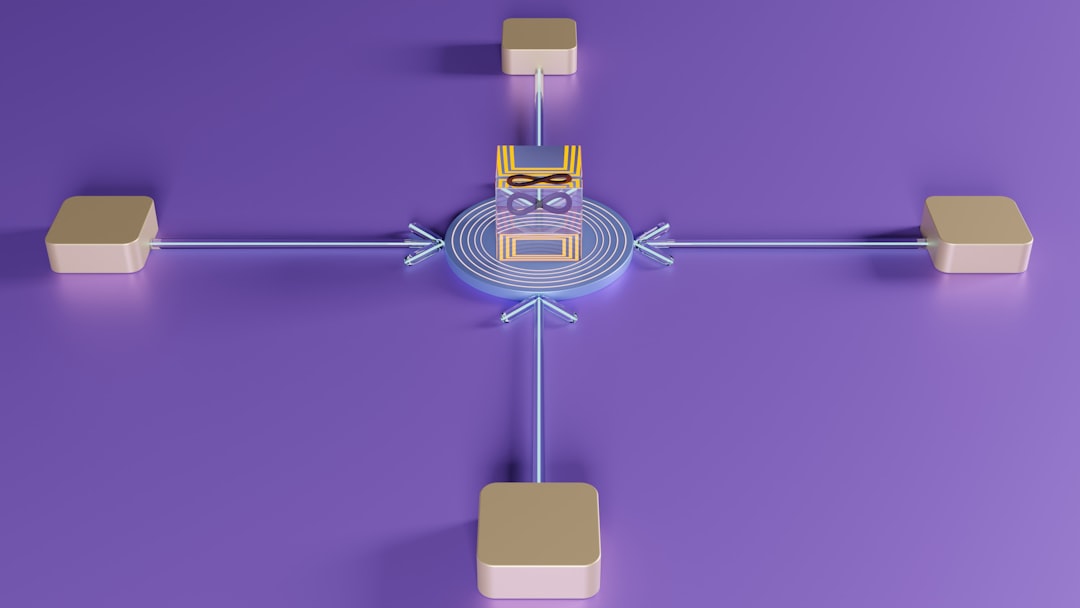

At its core, cloud native design rests on five foundational pillars:

- Microservices Architecture: Decomposing your application into small, independently deployable services — each owning its own data and business logic.

- Containerization: Packaging apps and their dependencies into containers (Docker being the canonical example) so behavior is consistent across every environment.

- Dynamic Orchestration: Using systems like Kubernetes to automate deployment, scaling, and management of containerized workloads.

- DevOps & CI/CD Pipelines: Tightening the feedback loop between development and operations through automation — deploy fast, fail fast, recover faster.

- Observability by Design: Treating logs, metrics, and distributed traces not as afterthoughts but as first-class citizens baked into your architecture from day one.

According to a 2026 Gartner report, by the end of this year, over 75% of new enterprise workloads will be deployed on cloud native platforms — up from 65% in 2023. The train has clearly left the station.

The 12-Factor App — Still Relevant, But Now We’re at 15

If you’ve been in the cloud native space for any length of time, you’ve heard of the Twelve-Factor App methodology — originally articulated by Heroku engineers. In 2026, this foundation remains solid, but the community has informally extended it with three additional factors driven by modern platform realities:

- Factor 13 — API-First Design: Every service exposes well-documented, versioned APIs. No sneaky internal coupling.

- Factor 14 — Security as Code: Zero-trust networking, secrets management (think HashiCorp Vault or AWS Secrets Manager), and RBAC policies defined in code, not in tickets.

- Factor 15 — Cost Observability: FinOps principles baked into the design — tagging resources, tracking per-service costs, and alerting on budget anomalies. In 2026, cloud waste is estimated at $147 billion globally annually (Flexera State of the Cloud Report 2026). That’s not a rounding error.
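To make Factor 15 a little more concrete, here is a minimal sketch of per-service cost tracking and budget anomaly alerting. The billing record shape (`cost_usd`, `tags`, a `service` tag key) and the 1.5x threshold are assumptions for illustration, not any cloud provider's actual billing export format.

```python
from collections import defaultdict
from statistics import mean


def cost_per_service(line_items):
    """Sum cost by the 'service' tag; untagged spend is grouped separately.

    Each line item is assumed to look like:
    {"cost_usd": 12.5, "tags": {"service": "payments"}}
    """
    totals = defaultdict(float)
    for item in line_items:
        service = item.get("tags", {}).get("service", "untagged")
        totals[service] += item["cost_usd"]
    return dict(totals)


def budget_anomalies(daily_history, today, threshold=1.5):
    """Return services whose spend today exceeds threshold x their trailing average.

    daily_history maps service -> list of previous daily costs.
    today maps service -> cost so far today.
    """
    flagged = []
    for service, history in daily_history.items():
        if not history:
            continue  # no baseline yet, nothing to compare against
        baseline = mean(history)
        if baseline > 0 and today.get(service, 0.0) > threshold * baseline:
            flagged.append(service)
    return sorted(flagged)
```

The "untagged" bucket is worth watching on its own: spend that nobody has claimed is often where the waste hides.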

Real-World War Story: The Database Bottleneck Nobody Saw Coming

Here’s one from my own notebook. Around 2023, I was consulting for a SaaS logistics company that had “gone cloud native” — or so they thought. They’d containerized everything, had Kubernetes running beautifully, CI/CD pipelines humming. But they had one fatal flaw: every single microservice was hitting the same shared PostgreSQL instance.

When Black Friday traffic hit, the DB became the single point of failure. Services that scaled horizontally without trouble at the compute layer hit a concrete wall at the data layer. We ended up provisioning emergency read replicas and caching layers at 2 AM, which was not fun. The lesson? Each microservice should own its own datastore. Yes, this creates eventual consistency challenges, but that’s a design problem worth solving upfront, not a crisis worth solving at midnight.

This is sometimes called the “Database-per-Service” pattern, and paired with event sourcing or CQRS (Command Query Responsibility Segregation), it gives you genuine decoupling.
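A rough sketch of the Database-per-Service idea, assuming two hypothetical services (`OrderService`, `ShippingService`) and an in-memory `EventBus` standing in for a real message broker. Each service keeps a private store, and the only thing they share is events.

```python
from collections import defaultdict


class EventBus:
    """In-memory stand-in for a message broker (illustrative only)."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, event_type, handler):
        self._subscribers[event_type].append(handler)

    def publish(self, event_type, payload):
        for handler in self._subscribers[event_type]:
            handler(dict(payload))  # hand each subscriber its own copy


class OrderService:
    def __init__(self, bus):
        self._orders = {}  # private datastore: no other service reads this
        self._bus = bus

    def place_order(self, order_id, address):
        self._orders[order_id] = {"address": address, "status": "placed"}
        self._bus.publish("order_placed", {"order_id": order_id, "address": address})


class ShippingService:
    def __init__(self, bus):
        self._shipments = {}  # its own datastore, built only from events
        bus.subscribe("order_placed", self._on_order_placed)

    def _on_order_placed(self, event):
        self._shipments[event["order_id"]] = {
            "address": event["address"],
            "state": "pending",
        }

    def shipment_for(self, order_id):
        return self._shipments.get(order_id)
```

In this toy version delivery is synchronous, so the shipping view is updated immediately. With a real broker the event arrives later, which is exactly the eventual consistency trade-off mentioned above: `ShippingService` may briefly not know about an order that `OrderService` has already accepted.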

Global Case Studies Worth Studying

Let’s ground this in real-world examples that illustrate these principles at scale:

- Netflix: The OG cloud native case study. Netflix runs on AWS with thousands of microservices, using Chaos Engineering (their famous Chaos Monkey) to proactively inject failures in production. In 2026, they’ve evolved this into full “resilience simulation” pipelines. Their approach to circuit breakers and fallback patterns via Hystrix (now largely replaced by Resilience4j) is textbook material.

- Kakao (South Korea): Korea’s super-app platform Kakao manages over 50 million active users through a hybrid cloud native architecture combining on-premise bare metal with public cloud burst capacity via Kubernetes federation. Their October 2022 outage (which affected 54 million users when a fire hit a data center) directly catalyzed their current multi-region, active-active design that’s considered a benchmark in Asia-Pacific cloud resilience planning.

- Shopify: By late 2025, Shopify had migrated their core commerce engine from a Rails monolith to a set of domain-driven microservices, achieving a 40% improvement in deployment frequency. Their engineering blog is a goldmine — search for their “Deconstructing the Monolith” series.

- LINE Corporation (Japan/Korea): LINE’s messaging infrastructure serves hundreds of millions of users across Asia using a heavily Kubernetes-native stack. Their investment in internal developer platforms (IDPs) — essentially building a PaaS on top of Kubernetes — reduced time-to-deploy for new services from weeks to hours.
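The circuit breaker and fallback pattern mentioned in the Netflix example can be sketched in a few lines. This is a simplified illustration of the general technique, not how Hystrix or Resilience4j implement it; the class name, parameters, and defaults are assumptions.

```python
import time


class CircuitBreaker:
    """Fail fast after repeated errors, then retry after a cool-down."""

    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after  # seconds to stay open before a trial call
        self._clock = clock             # injectable so tests can control time
        self._failures = 0
        self._opened_at = None          # None means the circuit is closed

    def call(self, func, fallback):
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.reset_after:
                return fallback()  # open: skip the dependency entirely
            # Cool-down elapsed: half-open, let one trial call through.
        try:
            result = func()
        except Exception:
            self._failures += 1
            # A failed trial call, or too many failures, (re)opens the circuit.
            if self._opened_at is not None or self._failures >= self.max_failures:
                self._opened_at = self._clock()
            return fallback()
        # Success closes the circuit and clears the failure count.
        self._failures = 0
        self._opened_at = None
        return result
```

A caller would wrap the risky dependency and supply a degraded answer, for example `breaker.call(fetch_live_recommendations, lambda: cached_recommendations)`, where both names are placeholders. The point is that a struggling downstream service stops receiving traffic while it recovers, instead of dragging its callers down with it.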

The Observability Imperative: You Can’t Fix What You Can’t See

One principle I’ve seen teams consistently underinvest in is observability. It’s not glamorous — it doesn’t ship features — but it’s the difference between a 5-minute incident and a 5-hour war room. The OpenTelemetry project (CNCF-backed) has become the de facto standard in 2026 for instrumenting distributed systems with traces, metrics, and logs in a vendor-agnostic way.

The practical checklist I now include in every architecture review:

- Distributed tracing implemented (Jaeger, Tempo, or commercial equivalent like Datadog APM)

- Structured logging with correlation IDs linking requests across service boundaries

- Service Level Objectives (SLOs) defined: not just uptime, but latency percentiles (p99, p99.9)

- Alerting based on symptom signals (error rate, latency degradation) rather than cause signals (CPU > 80%)

- Runbooks linked directly from alerts — because at 3 AM, nobody should be guessing
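As one example from the checklist, structured logging with correlation IDs can be sketched with only the Python standard library. The `X-Correlation-ID` header name is a common convention rather than a standard, and `handle_request` is a hypothetical entry point; in practice a tracing library such as OpenTelemetry would usually propagate this context for you.

```python
import contextvars
import json
import logging
import uuid

# Holds the ID for the request currently being processed.
correlation_id = contextvars.ContextVar("correlation_id", default="-")


class JsonFormatter(logging.Formatter):
    """Emit each log record as one JSON object, stamped with the correlation ID."""

    def format(self, record):
        return json.dumps({
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
            "correlation_id": correlation_id.get(),
        })


def handle_request(headers, logger):
    """Hypothetical request handler: reuse the caller's ID or mint a new one."""
    cid = headers.get("X-Correlation-ID") or str(uuid.uuid4())
    token = correlation_id.set(cid)
    try:
        logger.info("request received")
        # Headers to attach to any downstream call so the trail continues.
        return {"X-Correlation-ID": cid}
    finally:
        correlation_id.reset(token)  # do not leak the ID into the next request
```

Because every service logs the same ID in a machine-readable field, a single search in the log backend reconstructs the path of one request across service boundaries.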

Realistic Alternatives: Not Every Team Should Go Full Kubernetes Tomorrow

Here’s where I want to push back a little on the “cloud native or bust” narrative. If you’re a five-person startup shipping your first product, pulling in the full CNCF stack on day one is a recipe for yak-shaving yourself into oblivion. Kubernetes has very real operational overhead — CNCF’s own survey in 2026 shows that 38% of teams cite “operational complexity” as their top challenge with Kubernetes adoption.

Realistic on-ramps for smaller teams:

- Start with managed platforms: AWS App Runner, Google Cloud Run, or Railway.app give you container-native deployment without managing the control plane. You’re still cloud native in spirit.

- Strangler Fig pattern: Instead of rewriting your monolith overnight, extract services one capability at a time — new features go into new services, old features get migrated incrementally. Martin Fowler’s writing on this is essential reading.

- Modular monolith first: A well-structured monolith with clean domain boundaries is infinitely easier to split later than a tangled ball of mud. Don’t mistake “microservices” for “good architecture” — they’re not synonymous.
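The Strangler Fig on-ramp boils down to a routing facade in front of the monolith. Here is a minimal sketch, assuming a hypothetical `StranglerFacade` class and capabilities identified by simple string names; a real setup would typically do this at an API gateway or reverse proxy.

```python
class StranglerFacade:
    """Route each capability to its new service if migrated, else to the monolith."""

    def __init__(self, legacy_handler):
        self._legacy = legacy_handler  # callable(capability, request)
        self._migrated = {}            # capability name -> callable(request)

    def migrate(self, capability, new_handler):
        """Flip one capability over to its extracted service."""
        self._migrated[capability] = new_handler

    def rollback(self, capability):
        """Send a capability back to the monolith if the new service misbehaves."""
        self._migrated.pop(capability, None)

    def handle(self, capability, request):
        new_handler = self._migrated.get(capability)
        if new_handler is not None:
            return new_handler(request)
        return self._legacy(capability, request)
```

Callers only ever talk to the facade, so each migration (and each rollback) is a one-line routing change rather than a coordinated rewrite.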

Cloud native is a direction to move in, not an all-or-nothing switch. The principles (resilience, scalability, observability, automation) can be applied at any scale. Your job is to identify which principles unlock the most value at your current stage.

The companies that win aren’t necessarily the ones with the most sophisticated architecture. They’re the ones who match their architectural complexity to their organizational maturity — and keep improving incrementally, with intention.

Editor’s Comment: After a decade of watching teams over-engineer and under-engineer their way into trouble, my honest take is this: cloud native application design is less about technology and more about culture and feedback loops. The tools are mature, the patterns are documented, the case studies are abundant. The hard part is building a team that treats reliability as a feature, infrastructure as code, and failure as a learning opportunity rather than a catastrophe. Start with the principles, pick your tools to match your constraints, and resist the urge to adopt every shiny new framework in the CNCF landscape at once. Your future on-call self will thank you.
