Picture this: It’s 2 AM, and your e-commerce platform just crashed because 50,000 users simultaneously tried to check out during a flash sale. The monolithic backend choked, queues backed up, and your team spent the next six hours firefighting. Sound familiar? This exact scenario — played out at companies like early-stage Shopify clones and mid-size retail platforms — is precisely what pushed the software industry toward Event-Driven Architecture (EDA).

In 2026, EDA has moved from being a “nice to have” for tech giants to a practical necessity for any team building scalable, resilient systems. Let’s walk through what it actually looks like when you implement it — not in textbook diagrams, but in real, messy, production-grade code and systems.

What Is Event-Driven Architecture, Really?

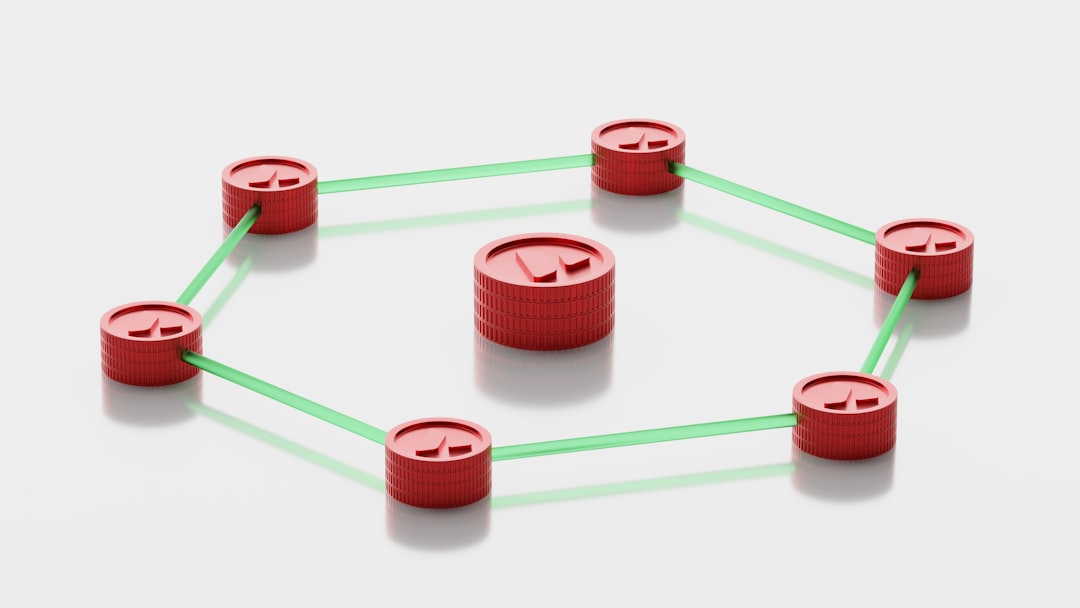

At its core, EDA is a design paradigm where system components communicate by producing and consuming events — discrete records of things that happened. Instead of Service A directly calling Service B (tight coupling), Service A emits an event like OrderPlaced, and any interested service — inventory, billing, notification — reacts independently.

The three key components you’ll always encounter:

- Event Producers: Services that detect a state change and publish an event (e.g., a payment service emitting PaymentSucceeded).

- Event Brokers: The middleware that routes events. Apache Kafka, AWS EventBridge, Google Pub/Sub, and RabbitMQ are the most widely used in 2026.

- Event Consumers: Services that subscribe to relevant events and act on them asynchronously.
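To make the three roles concrete, here is a minimal in-process sketch. It is not how a real broker works internally; `InMemoryBroker`, `Event`, and the `order_id` payload field are hypothetical names invented for illustration, and delivery here is synchronous where a real broker would be asynchronous.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Callable


@dataclass(frozen=True)
class Event:
    """An immutable record of something that already happened."""
    type: str
    payload: dict = field(default_factory=dict)


class InMemoryBroker:
    """Toy stand-in for a broker: routes events to subscribers by event type."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Callable[[Event], None]]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Callable[[Event], None]) -> None:
        self._subscribers[event_type].append(handler)

    def publish(self, event: Event) -> None:
        # The producer does not know who (if anyone) is listening.
        for handler in self._subscribers[event.type]:
            handler(event)


broker = InMemoryBroker()
reactions: list[str] = []

# Three independent consumers react to the same event.
broker.subscribe("OrderPlaced", lambda e: reactions.append(f"inventory:{e.payload['order_id']}"))
broker.subscribe("OrderPlaced", lambda e: reactions.append(f"billing:{e.payload['order_id']}"))
broker.subscribe("OrderPlaced", lambda e: reactions.append(f"notification:{e.payload['order_id']}"))

# The producer only emits the fact; it calls no downstream service directly.
broker.publish(Event("OrderPlaced", {"order_id": "o-1"}))
```

Adding a fourth consumer means one more `subscribe` call; the producer code does not change, which is the decoupling the definition above describes.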

Real-World Example #1: E-Commerce Order Processing Pipeline

Let’s build this out conceptually. Imagine a mid-size online retailer (say, a smaller competitor to Coupang or a European fashion startup) processing 10,000 orders per day.

Traditional (problematic) approach: The Order Service synchronously calls Inventory, then Payment, then Notification in sequence. One timeout cascades into a complete order failure.

EDA approach: The Order Service does one job — it persists the order and publishes OrderCreated to Kafka. Then:

- The Inventory Service consumes OrderCreated → reserves stock → emits StockReserved

- The Payment Service consumes StockReserved → charges the card → emits PaymentSucceeded or PaymentFailed

- The Notification Service consumes either outcome → sends the appropriate email or SMS

- The Analytics Service consumes everything → updates dashboards in near real-time
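The chain above can be sketched in a few lines of Python. This is a single-process simulation under stated assumptions: a `deque` stands in for the Kafka topic, stock is always available, and the "decline anything over 1000" payment rule is made up purely so both outcomes appear.

```python
from collections import deque

queue: deque[tuple[str, dict]] = deque()  # stand-in for the broker topic
sent: list[str] = []                      # messages the Notification Service "sent"
seen_by_analytics: list[str] = []         # every event type, in processing order


def emit(event_type: str, data: dict) -> None:
    queue.append((event_type, data))


def inventory_service(data: dict) -> None:
    # Assumption: stock is always available in this sketch.
    emit("StockReserved", data)


def payment_service(data: dict) -> None:
    # Hypothetical rule so that both outcomes show up.
    outcome = "PaymentSucceeded" if data["amount"] <= 1000 else "PaymentFailed"
    emit(outcome, data)


def notify_success(data: dict) -> None:
    sent.append(f"receipt for {data['order_id']}")


def notify_failure(data: dict) -> None:
    sent.append(f"payment failed for {data['order_id']}")


# Each service declares only which event it reacts to, never who produced it.
HANDLERS = {
    "OrderCreated": [inventory_service],
    "StockReserved": [payment_service],
    "PaymentSucceeded": [notify_success],
    "PaymentFailed": [notify_failure],
}


def run() -> None:
    while queue:
        event_type, data = queue.popleft()
        seen_by_analytics.append(event_type)  # analytics consumes everything
        for handler in HANDLERS.get(event_type, []):
            handler(data)


# The Order Service's only job: record the order and publish OrderCreated.
emit("OrderCreated", {"order_id": "o-1", "amount": 250})
emit("OrderCreated", {"order_id": "o-2", "amount": 5000})
run()
```

Notice that no service calls another service. Each one only knows the event it consumes and the event it emits.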

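The critical insight here? If the Notification Service goes down at midnight, orders still process. The notification just gets delivered when the service recovers, because Kafka retains events on disk for the topic’s retention period regardless of whether they have been consumed, and the recovered consumer resumes from its last committed offset. That’s resilience by design, not by heroics.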
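Why does a crashed consumer not lose events? A rough mental model is an append-only log where every consumer keeps its own read position. The sketch below is a deliberately simplified illustration, not Kafka’s actual API: `EventLog` and `poll` are hypothetical names, and a real consumer commits offsets explicitly rather than having them advanced on read.

```python
class EventLog:
    """Append-only log; each consumer tracks its own read position (offset)."""

    def __init__(self) -> None:
        self._events: list[str] = []
        self._offsets: dict[str, int] = {}

    def append(self, event: str) -> None:
        self._events.append(event)

    def poll(self, consumer: str) -> list[str]:
        """Return events this consumer has not seen yet and advance its offset."""
        start = self._offsets.get(consumer, 0)
        batch = self._events[start:]
        self._offsets[consumer] = len(self._events)
        return batch


log = EventLog()
log.append("PaymentSucceeded:o-1")
first = log.poll("notification")         # service is up and reads o-1

# The Notification Service is down: it simply stops polling.
# Producers keep writing, and other consumers are unaffected.
log.append("PaymentSucceeded:o-2")
log.append("PaymentFailed:o-3")
analytics_batch = log.poll("analytics")

# The service recovers and resumes from its stored offset.
missed = log.poll("notification")
```

After recovery, `missed` holds exactly the two events written during the outage, while `analytics_batch` was never affected by it.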

Real-World Example #2: Fintech — Fraud Detection at Scale

One of the most compelling EDA use cases in 2026 comes from the fintech sector. Kakao Pay in South Korea and Stripe internationally have both publicly discussed event-driven fraud detection pipelines. Here’s the pattern:

Every transaction triggers a TransactionInitiated event. Multiple fraud-detection consumers evaluate it in parallel — rule-based engines check velocity (too many transactions in 60 seconds?), ML models score risk in real-time, and geo-anomaly detectors flag location mismatches. Because these run concurrently rather than sequentially, the entire fraud check completes in under 200ms without blocking the transaction flow.
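Here is a small sketch of that fan-out using `asyncio.gather`. In production these checks would be separate consumers on separate machines; running them as coroutines in one process is only an analogy. The scoring rules, the 0.75 threshold, the field names, and the 50 ms simulated latency are all invented for illustration.

```python
import asyncio
import time


async def velocity_check(tx: dict) -> float:
    await asyncio.sleep(0.05)  # simulated I/O latency
    return 0.9 if tx["recent_tx_count"] > 5 else 0.1


async def ml_risk_score(tx: dict) -> float:
    await asyncio.sleep(0.05)
    return min(tx["amount"] / 10_000, 1.0)  # placeholder for a real model call


async def geo_anomaly_check(tx: dict) -> float:
    await asyncio.sleep(0.05)
    return 0.8 if tx["country"] != tx["card_country"] else 0.0


async def evaluate(tx: dict) -> dict:
    started = time.perf_counter()
    # All three checks wait concurrently, so total latency is roughly
    # that of the slowest check rather than the sum of all three.
    scores = await asyncio.gather(
        velocity_check(tx), ml_risk_score(tx), geo_anomaly_check(tx)
    )
    risk = max(scores)
    return {
        "risk": risk,
        "flagged": risk >= 0.75,  # hypothetical threshold
        "elapsed_s": time.perf_counter() - started,
    }


tx = {"amount": 120.0, "recent_tx_count": 8, "country": "KR", "card_country": "KR"}
result = asyncio.run(evaluate(tx))
```

With three 50 ms checks, a sequential pipeline would need at least 150 ms, while the concurrent version finishes in roughly the time of one check.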

A particularly interesting implementation detail: these systems use event sourcing alongside EDA. Every state change is stored as an immutable event in an append-only log, giving compliance teams a complete audit trail, which is critical under regulations like Korea’s Electronic Financial Transactions Act and Europe’s PSD3 framework (updated in early 2026).
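If event sourcing is new to you, the core idea fits in a few lines: state is never stored directly, it is derived by replaying events. The account model below is a hypothetical example (the `AccountEvent` type and the two event kinds are assumptions, not taken from any of the systems mentioned above).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccountEvent:
    kind: str    # "Deposited" or "Withdrawn"
    amount: int  # minor units (e.g. cents) to avoid float rounding


def apply(balance: int, event: AccountEvent) -> int:
    """Pure function: previous state + one event -> next state."""
    if event.kind == "Deposited":
        return balance + event.amount
    if event.kind == "Withdrawn":
        return balance - event.amount
    raise ValueError(f"unknown event kind: {event.kind}")


def replay(events, upto=None) -> int:
    """Rebuild the balance from the first `upto` events (all events if None)."""
    balance = 0
    for event in events[:upto]:
        balance = apply(balance, event)
    return balance


# The log is append-only; a tuple of frozen dataclasses models that immutability.
history = (
    AccountEvent("Deposited", 10_000),
    AccountEvent("Withdrawn", 2_500),
    AccountEvent("Deposited", 500),
)

current_balance = replay(history)       # state as of now
balance_after_two = replay(history, 2)  # state at an earlier point, for an audit
```

The audit trail comes for free: answering "what was the balance after the second event?" is just a shorter replay.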

Implementation Walkthrough: Setting Up with AWS EventBridge (2026 Stack)

For teams not ready to manage Kafka clusters, AWS EventBridge remains an excellent managed starting point. Here’s a simplified implementation pattern:

- Step 1: Define your event schema. Use JSON Schema or the AWS EventBridge Schema Registry. A poorly defined schema is the #1 cause of EDA headaches. Spend time here.

- Step 2: Create an Event Bus. Custom event buses isolate your domain events from AWS service events. One bus per bounded context (Orders, Inventory, Users) is a clean starting pattern.

- Step 3: Set up Event Rules. Rules filter which events route to which targets. For example, route all PaymentFailed events to both your refund Lambda AND your alerting SNS topic.

- Step 4: Implement idempotency in consumers. Events can occasionally be delivered more than once. Your consumers MUST handle duplicate events gracefully. Use idempotency keys or check-and-set operations in your database.

- Step 5: Implement Dead Letter Queues (DLQs). Failed event processing should never silently disappear. Route failed events to SQS DLQs for manual inspection or automated retry logic.
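Steps 4 and 5 are the ones teams most often skip, so here is a minimal sketch of both in one consumer. It assumes the event carries the `id`, `source`, `detail-type`, and `detail` fields of the EventBridge event envelope; everything else (`issue_refund`, the in-memory `processed_ids` set, the `dead_letters` list standing in for an SQS DLQ, and the retry count) is hypothetical.

```python
processed_ids: set[str] = set()  # in production: a durable store with a unique constraint
refunds: list[str] = []
dead_letters: list[dict] = []    # stand-in for an SQS dead-letter queue
MAX_ATTEMPTS = 3


def issue_refund(event: dict) -> None:
    """Hypothetical business logic; raises on a malformed event."""
    if "order_id" not in event["detail"]:
        raise ValueError("malformed event: missing order_id")
    refunds.append(event["detail"]["order_id"])


def handle(event: dict) -> str:
    # Step 4: the event id is the idempotency key, so duplicates are no-ops.
    if event["id"] in processed_ids:
        return "duplicate"

    last_error = ""
    for _attempt in range(MAX_ATTEMPTS):
        try:
            issue_refund(event)
        except ValueError as exc:
            last_error = str(exc)  # a real consumer would back off before retrying
        else:
            processed_ids.add(event["id"])
            return "processed"

    # Step 5: never drop a failed event silently; park it for inspection.
    dead_letters.append({"event": event, "error": last_error, "attempts": MAX_ATTEMPTS})
    return "dead-lettered"


ok = {"id": "evt-1", "source": "payments", "detail-type": "PaymentFailed",
      "detail": {"order_id": "o-1"}}
bad = {"id": "evt-2", "source": "payments", "detail-type": "PaymentFailed",
       "detail": {}}

results = [handle(ok), handle(ok), handle(bad)]
```

The second delivery of `evt-1` is ignored, so the customer is refunded once. One caveat: the check-then-add on `processed_ids` is not atomic, so a real implementation would rely on a conditional write or unique constraint in the database.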

The Challenges Nobody Talks About Enough

Let’s be honest — EDA isn’t a silver bullet, and I’d be doing you a disservice to pretend otherwise. Here are the realistic friction points:

- Debugging complexity: Tracing a bug across 6 asynchronous services is significantly harder than stepping through synchronous code. Distributed tracing tools like OpenTelemetry (now deeply integrated into most cloud platforms in 2026) are non-negotiable.

- Eventual consistency: Your inventory might briefly show an item as available while payment is processing. You need to design your UI and business logic around this reality, not fight it.

- Schema evolution: When your OrderCreated event needs a new field, you need backward-compatible versioning strategies. Consumer-driven contract testing (tools like Pact) helps enormously here.

- Operational overhead: Running Kafka means managing brokers, partitions, consumer groups, and lag monitoring. If your team is under 10 engineers, managed services (EventBridge, Pub/Sub, Confluent Cloud) are almost always the smarter starting point.
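For the schema evolution point, one common backward-compatible approach is the "tolerant reader": new fields get defaults, unknown fields are ignored. The sketch below assumes a hypothetical `currency` field added to OrderCreated in a later version; the field names and the "USD" default are illustrative only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OrderCreated:
    order_id: str
    amount: int
    currency: str = "USD"  # hypothetical field added later; the default keeps old events valid


def parse_order_created(raw: dict) -> OrderCreated:
    """Tolerant reader: required fields must exist, newer fields fall back
    to defaults, and fields this consumer does not know are ignored."""
    return OrderCreated(
        order_id=raw["order_id"],
        amount=raw["amount"],
        currency=raw.get("currency", "USD"),
    )


v1_event = {"order_id": "o-1", "amount": 4200}                     # written before the change
v2_event = {"order_id": "o-2", "amount": 990, "currency": "EUR"}   # written after the change
future_event = {"order_id": "o-3", "amount": 100, "currency": "KRW",
                "gift_wrap": True}                                 # field this consumer predates

orders = [parse_order_created(e) for e in (v1_event, v2_event, future_event)]
```

The same consumer reads old, current, and newer events without a redeploy, which lets producers and consumers upgrade on independent schedules.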

Realistic Alternatives Based on Your Situation

Here’s where I want to give you genuinely tailored advice rather than just evangelizing EDA:

- If you’re a startup with under 5 engineers: Start with a simple message queue (SQS + Lambda) for your most critical async workflow. Don’t architect for scale you don’t have yet. Extract 1-2 pain points and solve those first.

- If you’re a mid-size company with occasional traffic spikes: AWS EventBridge or Google Pub/Sub gives you 80% of EDA benefits with 20% of the operational complexity. This is the sweet spot for most teams in 2026.

- If you’re at enterprise scale with dedicated platform teams: Apache Kafka (or Confluent Platform) with a proper schema registry, ksqlDB (formerly KSQL) for stream processing, and full distributed tracing is absolutely worth the investment.

- If you just need simple background jobs: Honestly? A background job framework like Sidekiq (Ruby), Celery (Python), or BullMQ (Node.js) might be all you need. Not every async problem is an EDA problem.

The architectural decision should always be driven by your actual pain points — coupling, scalability bottlenecks, resilience failures — not by what’s trending on tech Twitter.

Editor’s Comment: Event-Driven Architecture has genuinely matured into one of the most practical tools in a modern engineer’s toolkit as of 2026. But the teams that succeed with it aren’t the ones who adopted it wholesale overnight. They’re the ones who identified a specific coupling or scalability problem, applied EDA surgically to solve it, learned the operational patterns, and then expanded thoughtfully. Start with one event, one producer, one consumer. Get that right before you redesign everything. The architecture will earn your trust, and so will your team’s confidence in operating it.

Tags: event-driven architecture, EDA implementation, Apache Kafka tutorial, microservices design patterns, AWS EventBridge 2026, real-time event processing, software architecture best practices